One of the largest news stories over the past month was the grounding of Boeing 737 MAX-8 and MAX-9 aircraft after an Ethiopian Airlines crash resulted in the deaths of everyone on board. This is the second deadly crash involving a Boeing 737 MAX. A Lion Air Boeing 737 MAX-8 crashed in October 2018, also killing everyone on board. As a result of these two crashes, Boeing 737 MAX airplanes have been temporarily grounded in over 41 countries, including China, the US, and Canada. Boeing also paused delivery of these planes, although it is continuing to produce them.

I have been following the Boeing 737 MAX story closely. It serves as an interesting case study on software and systems engineering, human factors, corporate behavior, and customer service.

Note: At the time this essay was written, the Lion Air and Ethiopian Airlines crashes were still under investigation. Ultimately, everything you are reading about these crashes and that I discuss here is still in the realm of speculation. However, the situation is serious enough and well-enough understood that Boeing is addressing the problem immediately.

Table of Contents:

- Brief Background on the 737 MAX

- The Suspected Problem

- Compounding Factors

- Boeing’s Response

- Is This the Result of Bad Software?

- Lessons We Can Apply to Our Systems

- Could This Happen in Your Company?

- Further Reading

- Acknowledgments

Brief Background on the 737 MAX

Before diving into the suspected problem with the 737 MAX, I need to set the stage with some background information about the aircraft.

The Boeing 737 is the best-selling aircraft in the world, with over 15,000 planes sold. After Airbus announced an upgrade to the A320 that provided 14% better fuel economy per seat, Boeing responded with the 737 MAX. Boeing sold the 737 MAX as an “upgrade” to the famed 737 design, using larger engines for improved fuel efficiency (also by 14%). Boeing claimed that the 737 MAX operated and flew in the same way as the 737 NG, so pilots licensed to fly the 737 NG did not need additional training and simulator time for the 737 MAX.

Because Boeing increased the engine size to improve fuel efficiency, the engines needed to be positioned higher up on the plane’s wings and slightly forward of the old position. Higher nose landing gear was also added to provide the same ground clearance as the 737 NG.

The larger engines and new positions destabilized the aircraft, but not under all conditions. The engine housings were designed so they do not generate lift in normal flight. However, if the airplane is in a steep pitch (e.g., takeoff or a hard turn), the engine housings generate more lift than on previous 737 models. Depending on the angle, the airplane’s inertia can cause the plane to over-swing into a stall.

To address the increased stall risk, Boeing developed a software solution: the Maneuvering Characteristics Augmentation System (MCAS). No other commercial plane uses a system like the MCAS, though Boeing uses a similar MCAS system on the KC-46 Pegasus military aircraft.

The MCAS is part of the flight management computer software. The pilot and co-pilot each have their own flight computer, but only one has control at a time. The MCAS takes readings from the angle of attack (AoA) sensor to determine how the plane’s nose is pointed relative to the oncoming air. The MCAS monitors airspeed, altitude, and AoA. When the MCAS determines that the angle of attack is too great, it automatically performs two actions to prevent a stall:

- Command the aircraft’s trim system to adjust the rear stabilizer and lower the nose

- Push the pilot’s yoke in the down direction

The movement of the rear stabilizer varies with the speed of the plane. The stabilizer moves more at slower speeds and less at higher speeds.

By default, the MCAS is active when:

- AoA is high (ascent, steep turn)

- Autopilot is off

- Flaps are up

The MCAS will deactivate once:

- The AoA measurement is below the target threshold

- The pilot overrides the system with a manual trim setting

- The pilot engages the CUTOUT switch, which disables automatic control of the stabilizer trim

If the pilot overrides the MCAS with trim controls, it will activate again within five seconds after the trim switches are released if the sensors still detect an AoA over the threshold. The only way to completely disable the system is to use the CUTOUT switch and take manual trim control.
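To make the conditions above concrete, here is a minimal sketch of the activation logic as I have described it. It is not Boeing's implementation. The names `FlightState` and `mcas_commands_nose_down` and the 15-degree threshold are hypothetical, and the re-engagement "within five seconds" is simplified to a fixed five-second pause.

```python
from dataclasses import dataclass

# Simplification: the essay says MCAS re-engages "within five seconds" of the
# trim switches being released; this sketch models that as a fixed pause.
REACTIVATION_DELAY_S = 5.0


@dataclass
class FlightState:
    aoa_deg: float                 # reading from the single AoA sensor in use
    autopilot_on: bool
    flaps_up: bool
    cutout_engaged: bool           # stabilizer trim CUTOUT switch
    yoke_pulled_back: bool         # present only to show that it is ignored
    seconds_since_manual_trim: float = float("inf")


def mcas_commands_nose_down(state: FlightState,
                            aoa_threshold_deg: float = 15.0) -> bool:
    """Return True if this sketch of MCAS would command nose-down trim.

    aoa_threshold_deg is an invented placeholder, not a real parameter.
    """
    if state.cutout_engaged:
        return False               # the only way to fully disable the system
    if state.autopilot_on or not state.flaps_up:
        return False               # MCAS is only active in manual, flaps-up flight
    if state.aoa_deg <= aoa_threshold_deg:
        return False               # AoA is back at or below the threshold
    if state.seconds_since_manual_trim < REACTIVATION_DELAY_S:
        return False               # manual trim pauses MCAS, but only briefly
    # Note what is missing: state.yoke_pulled_back is never consulted.
    return True
```

In this sketch, a state with a high AoA reading and `yoke_pulled_back=True` still returns `True`, which is the behavior the next paragraphs discuss.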

Note this important point: Boeing designed the MCAS to not turn off in response to a pilot manually pulling the yoke. Doing so would defeat the original purpose of the MCAS, which is to prevent the pilot from inadvertently entering a stall angle.

I highlight this point because a natural reaction to a plane that is pitching downward is to pull on the yoke. You are applying a counter-force to correct for the unexpected motion. For normal autopilot trim or runaway manual trim, pulling on the yoke does what you expect and triggers trim hold sensors.

We are under the impression that the column, yoke, steering wheel, gas pedal, and brakes fully control the response of the mechanical system. This is an illusion. Modern aircraft, like most modern cars, are “fly-by-wire”. Gone are the days of direct mechanical connections involving cables and hydraulic lines. Instead, most of the connections are purely electrical and typically mediated by a computer. In many ways we are being continually “guarded” by the computers that mediate these connections. It can be a terrible shock when the machine fights against you.

The Suspected Problem

The MCAS is suspected to have played a significant role in both crashes.

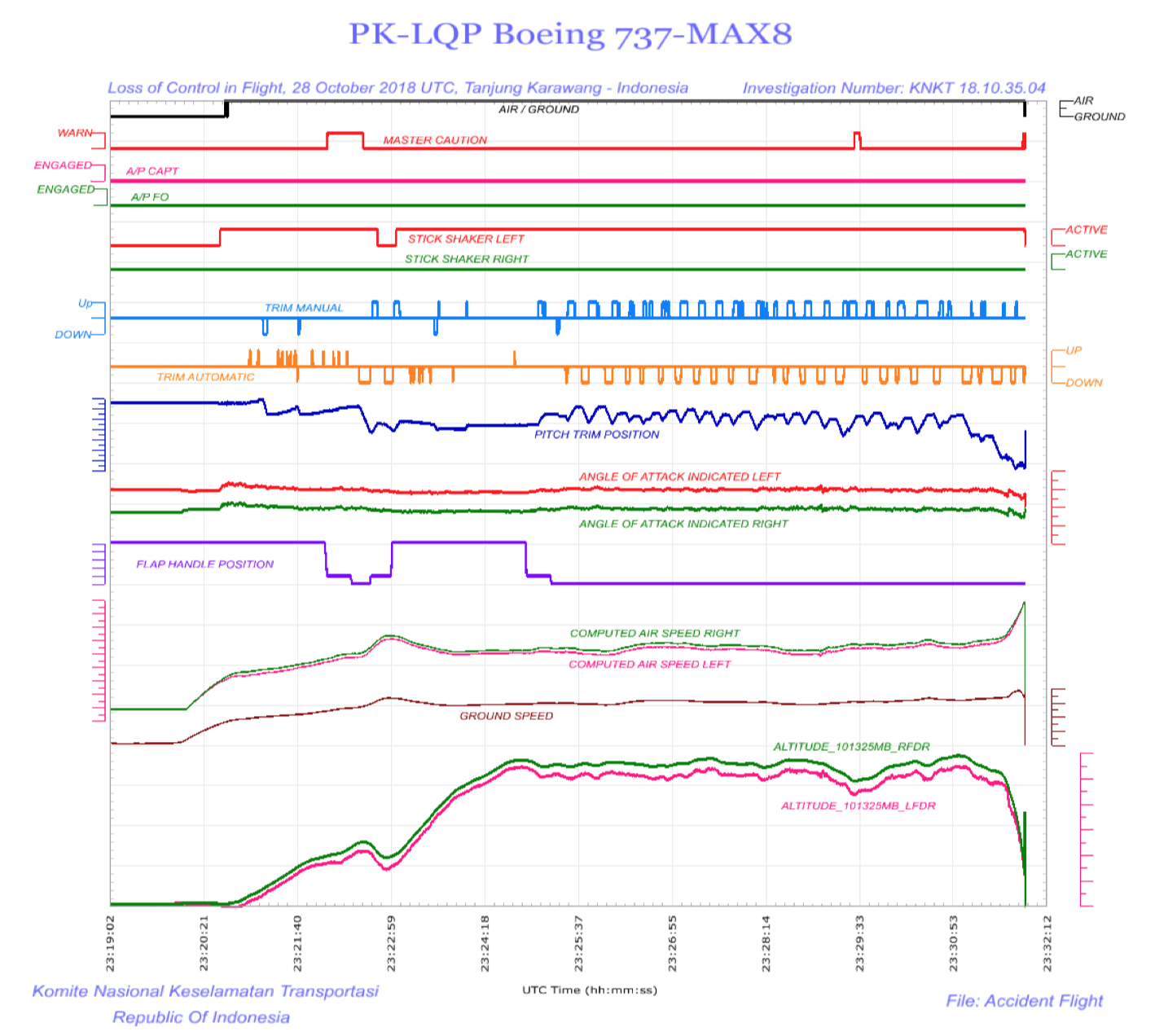

During Lion Air flight JT610, MCAS repeatedly forced the plane’s nose down, even when the plane was not stalling. The pilots tried to correct by pointing the nose higher, but the system kept pushing it down again. This up-and-down oscillation happened 21 times before the crash occurred. The Ethiopian Airlines crash shows a similar pattern. The Ethiopian Airlines CEO said that they believed that the MCAS was active during the Ethiopian Airlines crash.

Image from the Lion Air crash preliminary report. Notice how the Automatic Trim was forcing the aircraft down, and the pilots countered by pointing it back up. https://news.aviation-safety.net/2018/11/28/ntsc-indonesia-publishes-preliminary-report-on-jt610-boeing-737-max-8-accident/

If the plane wasn’t actually stalling, or even close to a stall angle, why was MCAS engaged?

AoA sensors can be unreliable, which is a suggested factor in the Lion Air crash, where there was a 20-degree discrepancy in AoA sensor readings. The MCAS only reads the AoA sensor on its corresponding side of the plane. The MCAS reacts to the reading faithfully and does not cross-check the other sensor to confirm the reading. If a sensor goes haywire, the MCAS has no way of knowing.

If the MCAS was enabled erroneously, why did the pilots not disable the system?

This is where the situation becomes muddled. The likeliest explanation for the Lion Air pilots is that they had no idea that the MCAS existed, that it was active, or how they could disable it.

Remember, the MCAS is a unique piece of software among commercial airplanes; it only runs on the 737 MAX. Boeing sold and certified the 737 MAX as a minor upgrade to the 737 body, which would not require pilots to re-certify or spend time training in simulators. As a result, it seems that the existence of the MCAS was largely kept quiet. The MCAS is mentioned only once by name in the original flight manual – in the glossary. Pilots from American Airlines and Southwest Airlines say they were not told about its existence or trained in its use.

“We do not like the fact that a new system was put on the aircraft and wasn’t disclosed to anyone or put in the manuals.”

- Jon Weaks, president of Southwest Airlines Pilots Association

“This is the first description you, as 737 pilots, have seen. It is not in the AA 737 Flight Manual Part 2, nor is there a description in the Boeing FCOM (flight crew operations manual). It will be soon.”

- Message to APA from Capt. Mike Michaelis

After the Lion Air crash, Boeing released a bulletin providing details on how the system worked and how to counteract it in case of malfunction. Boeing announced that the MCAS could move the stabilizer by 2.5 degrees. This movement limit applies separately for each time the MCAS is activated. Boeing confirmed that MCAS can move the stabilizer to its full downward position if the pilot did not counteract it with manual trimming or completely cutting out the system. With a limit of 2.5 degrees, two cycles of MCAS without pilot correction are enough to reach full downward position.

Boeing also said that emergency procedures that applied to earlier 737 models would have corrected the problems observed in the Lion Air crash.

The Lion Air pilots likely fought against an automated system that was working against them. The system is most likely to activate at low altitudes, such as during takeoff, leaving the pilots little time to react. Their search through the technical manuals proved unsuccessful.

The Ethiopian Airlines pilots had heard about MCAS thanks to the bulletin, although one pilot commented, “we know more about the MCAS system from the media than from Boeing”. Ethiopian Airlines installed one of the first simulators for the 737 MAX, but the pilot of the doomed flight had not yet received training in the simulator. All we know at this time is that the pilot reported “flight control problems” and wanted to return to the airport and that the Ethiopian Airlines crash resembles the Lion Air crash. We must wait for the preliminary report for more details.

Compounding Factors

Based on our current knowledge, the first-level analysis leads us to believe that the MCAS system was poorly designed and caused two plane crashes.

It’s not quite that simple. This is a complex situation, involving many people and organizations. Other pilots have struggled against the MCAS system and safely guided their passengers to their destination.

The following contributing factors play out time and again in other systems.

Poor Documentation

As I mentioned, after the Lion Air crash, pilots complained that they were not told about the MCAS or trained in how to respond when the system engages unexpectedly. This lack of documentation or training is especially dangerous when you are fighting against an automated system and your previous training does not fully apply (recall that pulling on the yoke to hold against the trim does not work against the MCAS). Even worse, Lion Air pilots attempted to find answers in their manuals before they crashed.

Pilots take their documentation extremely seriously. Below are three reports from the Aviation Safety Reporting System (ASRS), which is run by NASA to provide pilots and crews with a way to report safety issues confidentially.

The reports highlighted below focus on the insufficiency of Boeing 737 MAX documentation. I’ve bolded some sentences for emphasis.

ACN 1593017

Synopsis:

B737MAX Captain expressed concern that some systems such as the MCAS are not fully described in the aircraft Flight Manual.

Highlights from the narrative:

This description is not currently in the 737 Flight Manual Part 2, nor the Boeing FCOM, though it will be added to them soon. This communication highlights that an entire system is not described in our Flight Manual. This system is now the subject of an AD.

I think it is unconscionable that a manufacturer, the FAA, and the airlines would have pilots flying an airplane without adequately training, or even providing available resources and sufficient documentation to understand the highly complex systems that differentiate this aircraft from prior models. The fact that this airplane requires such jury rigging to fly is a red flag. Now we know the systems employed are error prone–even if the pilots aren’t sure what those systems are, what redundancies are in place, and failure modes.

I am left to wonder: what else don’t I know? The Flight Manual is inadequate and almost criminally insufficient. All airlines that operate the MAX must insist that Boeing incorporate ALL systems in their manuals.

ACN 1593021

Synopsis:

B737MAX Captain reported confusion regarding switch function and display annunciations related to “poor training and even poorer documentation”.

Highlights from narrative:

This is very poorly explained. I have no idea what switch the preflight is talking about, nor do I understand even now what this switch does.

I think this entire setup needs to be thoroughly explained to pilots. How can a Captain not know what switch is meant during a preflight setup? Poor training and even poorer documentation, that is how.

It is not reassuring when a light cannot be explained or understood by the pilots, even after referencing their flight manuals. It is especially concerning when every other MAINT annunciation means something bad. I envision some delayed departures as conscientious pilots try to resolve the meaning of the MAINT annunciation and which switches are referred to in the setup.

ACN 1555013

Synopsis:

B737 MAX First Officer reported feeling unprepared for first flight in the MAX, citing inadequate training.

Highlights from narrative:

I had my first flight on the Max [to] ZZZ1. We found out we were scheduled to fly the aircraft on the way to the airport in the limo. We had a little time [to] review the essentials in the car. Otherwise we would have walked onto the plane cold.

My post flight evaluation is that we lacked the knowledge to operate the aircraft in all weather and aircraft states safely. The instrumentation is completely different – My scan was degraded, slow and labored having had no experience w/ the new ND (Navigation Display) and ADI (Attitude Director Indicator) presentations/format or functions (manipulation between the screens and systems pages were not provided in training materials. If they were, I had no recollection of that material).

We were unable to navigate to systems pages and lacked the knowledge of what systems information was available to us in the different phases of flight. Our weather radar competency was inadequate to safely navigate significant weather on that dark and stormy night. These are just a few issues that were not addressed in our training.

Even worse, it appears that the FAA’s System Safety Analysis document was also incorrect:

The original Boeing document provided to the FAA included a description specifying a limit to how much the system could move the horizontal tail — a limit of 0.6 degrees, out of a physical maximum of just less than 5 degrees of nose-down movement. […] That limit was later increased after flight tests showed that a more powerful movement of the tail was required to avert a high-speed stall, when the plane is in danger of losing lift and spiraling down.

After the Lion Air Flight 610 crash, Boeing for the first time provided to airlines details about MCAS. Boeing’s bulletin to the airlines stated that the limit of MCAS’s command was 2.5 degrees. That number was new to FAA engineers who had seen 0.6 degrees in the safety assessment.

“The FAA believed the airplane was designed to the 0.6 limit, and that’s what the foreign regulatory authorities thought, too,” said an FAA engineer. “It makes a difference in your assessment of the hazard involved.”

I understand the pilots’ concern, given that the MCAS could move the tail 4x farther than stated in the official safety analysis. What else is undocumented or documented incorrectly?
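The arithmetic behind that concern is worth spelling out. The sketch below uses only the figures quoted above: the 0.6-degree limit in the safety analysis, the 2.5-degree limit in Boeing's bulletin, and a physical maximum of "just less than 5 degrees", which I round to 5.0 as an assumption. The function name is my own.

```python
import math

DOCUMENTED_LIMIT_DEG = 0.6   # per-activation limit in the System Safety Analysis
ACTUAL_LIMIT_DEG = 2.5       # per-activation limit stated in Boeing's bulletin
MAX_NOSE_DOWN_DEG = 5.0      # assumption: "just less than 5 degrees", rounded up


def activations_to_full_travel(limit_per_activation_deg: float,
                               max_travel_deg: float = MAX_NOSE_DOWN_DEG) -> int:
    """Uncorrected MCAS activations needed to reach full nose-down travel."""
    return math.ceil(max_travel_deg / limit_per_activation_deg)


ratio = ACTUAL_LIMIT_DEG / DOCUMENTED_LIMIT_DEG
print(f"Actual limit is {ratio:.2f}x the documented limit")
print("Activations to full travel at 0.6 deg:",
      activations_to_full_travel(DOCUMENTED_LIMIT_DEG))
print("Activations to full travel at 2.5 deg:",
      activations_to_full_travel(ACTUAL_LIMIT_DEG))
```

Under these assumptions, the documented limit would need nine uncorrected activations to reach full travel, while the actual limit needs only two. That is a very different hazard to assess.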

Rushed Release

I would bet that all engineers are familiar with rushed releases. We cut corners, make concessions, and ignore or mask problems – all so we can release a product by a specific date. Any problems are downplayed, and those that are observed by the customer can be fixed later in a patch.

Apparently, the 737 MAX was subject to the same treatment. Here are some key highlights from the article:

- The FAA delegates some certification and technical assessments to airplane manufacturers, citing lack of funding and resources to carry out all operations internally

- FAA managers have final authority on what gets delegated to the manufacturer

- Boeing was under time pressure, because development of the 737 MAX was nine months behind the new A320neo

- FAA technical experts said in interviews that managers prodded them to speed up the process

- An FAA safety engineer who was involved with certifying the 737 MAX was quoted as saying that halfway through the certification process:

- “We were asked by management to re-evaluate what would be delegated. Management thought we had retained too much at the FAA.”

- “There was constant pressure to re-evaluate our initial decisions. And even after we had reassessed it […] there was continued discussion by management about delegating even more items down to the Boeing Company.”

- “There wasn’t a complete and proper review of the documents. Review was rushed to reach certain certification dates.”

- If there wasn’t time for FAA staff to complete a review, FAA managers either signed off on the documents themselves or delegated the review to Boeing

- As a result of this rushed process, a major change slipped through the process:

- The System Safety Analysis on MCAS claims that the horizontal tail movement is limited to 0.6 degrees

- This number was found to be insufficient for preventing a stall in worst-case scenarios

- The number was increased 4x to 2.5 degrees

- The FAA was never told about this change, and FAA engineers did not learn about it until Boeing released the MCAS bulletin following the Lion Air crash

The New York Times corroborates this rushed release:

- “The pace of the work on the 737 Max was frenetic, according to current and former employees who spoke with The New York Times.”

- “The timeline was extremely compressed,” the engineer said. “It was go, go, go.”

- “One former designer on the team working on flight controls for the Max said the group had at times produced 16 technical drawings a week, double the normal rate.”

- “Facing tight deadlines and strict budgets, managers quickly pulled workers from other departments when someone left the Max project.”

- “Roughly six months after the project’s launch, engineers were already documenting the differences between the Max and its predecessor, meaning they already had preliminary designs for the Max — a fast turnaround, according to an engineer who worked on the project.”

- “A technician who assembles wiring on the Max said that in the first months of development, rushed designers were delivering sloppy blueprints to him. He was told that the instructions for the wiring would be cleaned up later in the process, he said.”

- “His internal assembly designs for the Max, he said, still include omissions today, like not specifying which tools to use to install a certain wire, a situation that could lead to a faulty connection. Normally such blueprints include intricate instructions.”

- “Despite the intense atmosphere, current and former employees said, they felt during the project that Boeing’s internal quality checks ensured the aircraft was safe”

- “This program was a much more intense pressure cooker than I’ve ever been in,” he added. “The company was trying to avoid costs and trying to contain the level of change. They wanted the minimum change to simplify the training differences, minimum change to reduce costs, and to get it done quickly.”

I’ve worked on many fast-paced engineering projects. I’ve observed and personally made compromises to meet deadlines, and there are many that I disagreed with. All of these points are familiar and hit home. I was quite surprised to find that the culture that builds aircraft would be so similar to the culture that builds consumer electronics.

Delayed Software Updates

Weeks after the Lion Air crash, Boeing officials told the Southwest Airlines and American Airlines pilots’ unions that they planned to have software updates available around the end of 2018.

“Boeing was going to have a software fix in the next five to six weeks,” said Michael Michaelis, the top safety official at the American Airlines pilots union and a Boeing 737 captain. “We told them, ‘Yeah, it can’t drag out.’ And well, here we are.”

The FAA told The Wall Street Journal that FAA work on the new MCAS software was delayed for five weeks by the government shutdown. However, the “enhancement” was submitted to the FAA for certification on 21 January, only four days before the shutdown ended.

The official software update was announced four months later than the initial estimate. It will still take many more months to approve and deploy.

We are all conditioned to waiting for fixes and updates. Teams are prone to giving idealistic estimates. Problems take longer than expected to diagnose, correct, and validate. Schedules are repeatedly overrun.

However, it’s not going to comfort the families of those who lost their lives on Ethiopian Airlines Flight 302 that Boeing released a software fix for certification seven weeks before the fatal crash. There is a real cost to the delay of software updates, and that cost increases significantly with the impact of the issue. It is always better to take the necessary time to implement a robust design in order to avoid needing a patch at all.

Humans Were Out of the Loop

One uncomfortable computing fact remains true: humans are superior at dynamically receiving and synthesizing data.

Computers can only perform actions they were already programmed to do. A computer cannot take in additional data which it wasn’t already programmed to read. The MCAS was designed to use a single data point, that of the AoA sensor on the corresponding side of the plane. The initial NTSC report on the Lion Air crash tells us that a single faulty AoA sensor triggered the MCAS.

If a pilot or co-pilot noticed a strange AoA reading (such as a 20-degree difference between the left and right AoA sensors), he or she could perform a “cross check” by glancing at the reading on the other side of the plane. Additional sensors and gauges can be read to corroborate or disprove a strange AoA reading. Hell, a pilot could even look out the window to get a sense of the plane’s angle. The pilots could have a discussion and collectively determine which sensor they trusted. Our brains can take in any combination of this information and confirm/disprove a sensor reading.

What is even more troubling is that the system’s behavior was opaque to the pilots. According to Boeing, the MCAS is (counter-intuitively) only active in manual flight mode, and is disabled when under autopilot. MCAS controls the trim without notifying the pilots that it is doing so.

Boeing did provide two optional features that would provide more insight into the situation:

- An AoA indicator, which displays the sensor readings

- An AoA disagree light, which lights up if the two AoA sensors disagree

But because these were optional, many carriers did not elect to buy them.

In a fight between an unaware human pilot and the MCAS, the MCAS has a fair chance of winning. Even if the pilot disables MCAS by setting a manual trim, MCAS would automatically kick back in if the high AoA reading was still detected. Combined with the fact that the MCAS could move the stabilizer 2.5 degrees per activation, it could continue to push the aircraft nose down until the stabilizer’s force could no longer be overcome by the pilot’s input.

Because of our superiority at dynamic information synthesis, humans must maintain the ability to override or overpower an automated process. At present, nothing in the world is as skilled at dealing with complexity and chaos as the human mind.

Boeing’s Response

We’ve pointed a lot of fingers at Boeing, so let’s take a moment to review what they are doing in response.

An MCAS software update has been announced:

Boeing has developed an MCAS software update to provide additional layers of protection if the AOA sensors provide erroneous data. The software was put through hundreds of hours of analysis, laboratory testing, verification in a simulator and two test flights, including an in-flight certification test with Federal Aviation Administration (FAA) representatives on board as observers.

The following changes will be made:

- Flight control system will now compare inputs from both AOA sensors

- If the sensors disagree by 5.5 degrees or more with the flaps retracted, MCAS will not activate

- An indicator on the flight deck display will alert the pilots to AoA Disagree condition

- This was previously a paid upgrade, but will now ship as a standard feature

- MCAS will also be disabled, and AoA Disagree displayed, if the AoA readings differ by more than 10° for over 10 seconds during flight

- If MCAS is activated in non-normal conditions, it will only provide one input for each elevated AOA event

- There are no known or envisioned failure conditions where MCAS will provide multiple inputs.

- MCAS can never command more stabilizer input than can be counteracted by the flight crew pulling back on the yoke.

- The pilots will continue to always have the ability to override MCAS and manually control the airplane
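A rough sketch of two of the announced changes, the sensor cross-check and the single input per elevated-AoA event, might look like the following. This is my own illustration of the bullet points above, not Boeing's code. The class name, the 15-degree activation threshold, and the choice to require both sensors to read above that threshold are all assumptions. The sketch also assumes flaps retracted and autopilot off throughout.

```python
DISAGREE_LIMIT_DEG = 5.5  # from the announcement: disagreement that inhibits MCAS


class UpdatedMcasGate:
    """Decides whether a nose-down input is allowed for each sensor sample."""

    def __init__(self, aoa_threshold_deg: float = 15.0):
        # Hypothetical threshold; the real value is not given in this essay.
        self.aoa_threshold_deg = aoa_threshold_deg
        self.fired_this_event = False

    def step(self, left_aoa_deg: float, right_aoa_deg: float) -> bool:
        # Cross-check: refuse to act when the two sensors disagree too much.
        if abs(left_aoa_deg - right_aoa_deg) >= DISAGREE_LIMIT_DEG:
            return False

        # Assumption: treat AoA as elevated only if both sensors say so.
        elevated = min(left_aoa_deg, right_aoa_deg) > self.aoa_threshold_deg
        if not elevated:
            self.fired_this_event = False   # event over; re-arm for the next one
            return False

        if self.fired_this_event:
            return False                    # only one input per elevated-AoA event

        self.fired_this_event = True
        return True


gate = UpdatedMcasGate()
print(gate.step(22.0, 2.0))   # 20-degree disagreement, as reported for Lion Air
print(gate.step(18.0, 17.0))  # sensors agree and AoA is elevated
print(gate.step(18.0, 17.0))  # same event is still in progress
```

With these assumed values, the three calls print `False`, `True`, and `False`: the disagreeing readings are rejected, and a genuine high-AoA event produces one input rather than a repeating series.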

In addition to the software changes, there are extensive training changes. Pilots will have to complete 21+ days of instructor-led academics and simulator training. Computer-based training will be made available to all 737 MAX pilots, which includes the MCAS functionality, associated crew procedures, and related software changes. Pilots will also be required to review the new documents:

- Flight Crew Operations Manual Bulletin

- Updated Speed Trim Fail Non-Normal Checklist

- Revised Quick Reference Handbook

Boeing and the FAA participated in an evaluation of the software and a 12 March test flight. Boeing will now work on getting the update approved for installation by the various airworthiness authorities around the world. I expect this to be a long road to approval after Boeing and the FAA destroyed their store of trust.

All of these actions seem correct to me as an engineer and systems builder. But I am crestfallen that they weren’t included in the initial release.

Is This the Result of Bad Software?

It’s very tempting to label the 737 MAX crashes as “caused by software.” At some level, this is true. However, the MCAS appears to be a software patch applied to a larger systems problem (and a hastily assembled patch at that).

Let’s walk through the chain that appears to have led us here:

- Fuel is expensive, and we want more efficient engines to reduce that burden

- Airbus was improving their aircraft, which placed pressure on Boeing to respond with their own improved platform

- The timeline was largely dictated by Airbus, not the time Boeing engineers needed to complete the project

- Boeing wanted to stick to the 737 platform for a variety of reasons:

- Faster time to market

- Lower cost for producing and certifying a new plane

- Pilot familiarity, leading to reduced training requirements for airlines

- Boeing sold the 737 MAX to airlines on the ideals of increased fuel efficiency, platform familiarity, and lower upgrade costs

- Bigger engines did not fit on the existing 737 platform, so modifications were needed:

- Move the engines forward

- Mount the engines higher

- Increase the height of the front landing gear

- These modifications changed the aerodynamics of the airplane, which should have changed certification requirements and required more training

- Instead Boeing created the MCAS to address the aerodynamic impact of the new design

- Boeing downplayed the MCAS system, which resulted in:

- Improper/insufficient certification

- Insufficient documentation

- Pilots received no training for handling the new 737 MAX

This is a systems engineering problem created by the company’s design goals. Boeing’s guiding light was to reuse the 737 platform so they could keep up with Airbus and minimize training requirements. Redesigning the airplane was entirely out of the question because it would give Airbus a significant time advantage and necessitate expensive training. To meet the design goals and avoid an expensive hardware change, Boeing created the MCAS as a software band-aid.

This scenario is quite familiar to me. As a firmware engineer, applying software workarounds for silicon or hardware design flaws is a major part of my work. Fixing hardware is “expensive” in terms of both time and money. At some point it’s too late to change the hardware (or so I’ve been repeatedly told). The schedule drives the decision to move forward with known hardware design flaws.

The next line is predictable: “The problem will just have to be fixed in software.” But software fixes do not always work. When the software workaround fails, we seem to forget that we were already attempting to hide a problem.

I am not alone in the view that this is not a “software problem”. Trevor Sumner had an excellent Twitter thread where he summarized the thoughts of Dave Kammeyer. Trevor’s take extends beyond the Boeing analysis and even includes non-software factors leading to the crashes (re-formatted for easier reading):

On both ill-fated flights, there was a:

- Sensor problem. The AoA vane on the 737MAX appears to not be very reliable and gave wildly wrong readings. On #LionAir, this was compounded by a:

- Maintenance practices problem. The previous crew had experienced the same problem and didn’t record the problem in the maintenance logbook. This was compounded by a:

- Pilot training problem. On LionAir, pilots were never even told about the MCAS, and by the time of the Ethiopian flight, there was an emergency AD issued, but no one had done sim training on this failure. This was compounded by an:

- Economic problem. Boeing sells an option package that includes an extra AoA vane, and an AoA disagree light, which lets pilots know that this problem was happening. Both 737MAXes that crashed were delivered without this option. No 737MAX with this option has ever crashed. All of this was compounded by a:

- Pilot expertise problem. If the pilots had correctly and quickly identified the problem and run the stab trim runaway checklist, they would not have crashed.

His closing point is austere (emphasis mine):

Nowhere in here is there a software problem. The computers & software performed their jobs according to spec without error. The specification was just shitty. Now the quickest way for Boeing to solve this mess is to call up the software guys to come up with another band-aid.

I’ve watched the “fix it in software” cycle play out repeatedly when developing iPhones. Should we be surprised that the same happens for an airplane too? What would prevent it, the idea of a safety culture? Can you ever be truly safe when you are optimizing for time-to-market and reduced costs?

After the resulting deaths, loss in market cap, and destruction of trust, one must wonder if Boeing will ever realize the cost and time savings they hoped the software fix would provide.

Note: We should leave open the possibility that there is a compounding software issue at play, since there are ASRS reports which indicate problems that occurred with autopilot on, a scenario where MCAS is supposed to be inactive.

Lessons We Can Apply to Our Systems

A complex system operated in an unexpected manner, and 346 people are dead as a result of two tragic and catastrophic accidents. Though the lives cannot be restored, if many systems and software engineers can learn as much as possible about this case, such deaths can be prevented in the future.

These are the lessons that I’ve learned from this investigation so far:

- You Cannot Bend Complex Systems to Your Will

- Where You are Aiming is the Most Important Thing

- Treat Documentation as a First-Class Citizen

- Keep Humans in the Loop

- Testing Doesn’t Mean You Are Safe

You Cannot Bend Complex Systems to Your Will

Boeing took an existing complex system and tried to change that system to force a specific outcome. Systems thinkers everywhere are cringing at this, because all changes to complex systems have unintended consequences.

Donella Meadows said in “Dancing with Systems”:

But self-organizing, nonlinear, feedback systems are inherently unpredictable. They are not controllable. They are understandable only in the most general way. The goal of foreseeing the future exactly and preparing for it perfectly is unrealizable. The idea of making a complex system do just what you want it to do can be achieved only temporarily, at best. We can never fully understand our world, not in the way our reductionistic science has led us to expect. Our science itself, from quantum theory to the mathematics of chaos, leads us into irreducible uncertainty. For any objective other than the most trivial, we can’t optimize; we don’t even know what to optimize. We can’t keep track of everything. We can’t find a proper, sustainable relationship to nature, each other, or the institutions we create, if we try to do it from the role of omniscient conqueror.

Meadows continues:

Systems can’t be controlled, but they can be designed and redesigned. We can’t surge forward with certainty into a world of no surprises, but we can expect surprises and learn from them and even profit from them. We can’t impose our will upon a system. We can listen to what the system tells us, and discover how its properties and our values can work together to bring forth something much better than could ever be produced by our will alone.

These thoughts are echoed by Dr. Russ Ackoff in a short talk titled “Beyond Continual Improvement”. The points he makes in that brief fifteen minutes repeatedly echoed in my head while writing this essay.

A system is not the sum of the behavior of its parts, it is a product of their interactions. The performance of a system depends on how the parts fit, not how they act taken separately.

Boeing changed a few individual parts of the plane and expected the overall performance to be improved. But the effect on the overall system was more complex than the changes led them to expect.

When you get rid of something you don’t want (remove a defect), you are not guaranteed to have it replaced with what you do want.

We are all familiar with the experience of fixing a bug, only to have a new bug (or several) appear as a result of our fix.

Finding and removing defects is not a way to improve the overall quality or performance of a system.

The larger engines on the 737 airframe resulted in undesirable flight characteristics (excessive upward pitch at steep AoA). Boeing responded by attempting to address this defect with the MCAS. It’s clear that the MCAS does not unilaterally improve the overall quality or performance of the aircraft.

What aspects of your system are you trying to force? Perhaps you can broaden your perspective and look at different approaches. The answer will reveal itself if you listen, though you might have to head in a different direction than you originally intended.

Where You are Aiming is the Most Important Thing

There is an idea that I’ve been holding in the forefront of my mind: nothing has more of an impact on where you will eventually end up than where you are aiming. Setting the right aim is the most important thing.

It seems to me that Boeing’s aim was to keep up with Airbus, leading to an aggressive time-to-market. They also wanted to minimize changes to ease certification and ensure that pilots did not need to receive new training. Those are the principles that appear to have guided their actions. Safety was still a concern, but that is not what the organization, system, or schedule focused on.

Dr. Ackoff echoes this idea in “Beyond Continual Improvement”:

Basic principle: an improvement program must be directed at what you want, not at what you don’t want

At one level, we can say that Boeing wanted a new aircraft with improved fuel efficiency to compete with Airbus.

At another level, what Boeing wanted was to design a new aircraft with improved fuel efficiency, but in such a way as to not require a new airframe design, to not require a timeline that delayed them significantly with regard to the Airbus launch, and to not require pilots to receive training on the new airplane.

Boeing seems to have focused heavily on the things they did not want out of the improved design.

If you stick to the base level of desire (wanting a new aircraft with improved fuel efficiency), it seems that the system needed to be largely redesigned with a new airframe to support larger engines.

Your company’s aim is a truly powerful force. Your organization is headed in only that direction.

Ask yourselves often: is it the proper aim?

Treat Documentation as a First-Class Citizen

If other people will use your product, you need to treat documentation as a first-class citizen. Useful and comprehensive documentation and training are extremely important to your users and to the engineers and managers who come after you.

Pilots are fanatical about their documentation, as well they should be. There is clear and documented outrage that details were kept from them.

In this case, improved documentation would have led to better understanding of the system forces at work. Improved documentation alone could have potentially saved hundreds of lives.

We try to hold back because we think our users don’t need (or can’t handle) the details:

One high-ranking Boeing official said the company had decided against disclosing more details to cockpit crews due to concerns about inundating average pilots with too much information – and significantly more technical data – than they needed or could digest.

Software teams often take this view of their users. Perhaps it is simply a rationalization for not wanting to put the effort into creating and maintaining documentation. How can we predict what information people need to know? What is too technical, and what is enough information? Won’t the details change as the system evolves? How will we keep it maintained?

When we leave out documentation or fudge the explanations of how things work, we hinder our users. What could your users accomplish with your system if they had a full understanding of how it worked? I guarantee they can handle and achieve much more than you expect.

Software teams also hinder themselves when they neglect documentation. When we document, we are acting as explorers, mapping uncharted territory. New team members can learn how the system is designed. Ideas for simplification will jump out at you. You’ll start thinking about novel ways to use your software and the edge cases that will be encountered. Poorly understood system aspects are suddenly obvious – “here be dragons”.

It’s a popular adage: if you can’t explain something in simple terms, you don’t understand it. And if you don’t explain something, nobody else has a chance of understanding it.

Keep Humans in the Loop

I stated earlier that humans must maintain the ability to override or overpower an automated process. Because of our superiority at dynamic information collection and synthesis, we can improvise and make novel decisions in response to new situations. A computer, which has been preprogrammed to read from a limited amount of information and perform a set of specific responses, is not (yet) capable of improvising.

“What we have here is a ‘failure of the intended function,’ going back to your recent piece [on SOTIF, Safety of the Intended Functionality],” Barnden said. “A plane shouldn’t fight the pilot and fly into the ground. This is happening after decades of R&D into aviation automation, cockpit design and human factors research in planes.”

System designers and programmers are not all-knowing. Make sure that humans are kept in the loop – let them override your automated processes. Perhaps they know better after all.
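As a generic illustration of that advice, here is a minimal sketch of an automated adjustment that stands down whenever the operator overrides it, refuses to act on redundant inputs that disagree, and caps how much it may command in one step. Every name and threshold here (`automated_adjustment`, `limit`, `max_disagreement`, `max_command`) is hypothetical. This is not how MCAS or any real flight control system is implemented; it only shows the shape of the idea.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    command: float  # adjustment to apply; 0.0 means "do nothing"
    reason: str     # why the automation acted or stood down


def automated_adjustment(
    sensor_a: float,
    sensor_b: float,
    operator_override: bool,
    limit: float = 10.0,            # hypothetical threshold for acting
    max_disagreement: float = 5.0,  # hypothetical tolerance between sensors
    max_command: float = 1.0,       # hypothetical cap on a single correction
) -> Decision:
    """Decide what the automation should do for one control cycle."""
    # 1. The human always wins: an override disables the automation outright.
    if operator_override:
        return Decision(0.0, "operator override")

    # 2. Do not act on inputs that disagree; one of them is probably faulty.
    if abs(sensor_a - sensor_b) > max_disagreement:
        return Decision(0.0, "sensor disagreement")

    reading = (sensor_a + sensor_b) / 2.0
    if reading <= limit:
        return Decision(0.0, "within limits")

    # 3. Bound the automation's authority so a human can always overpower it.
    command = min(reading - limit, max_command)
    return Decision(-command, "correcting")


if __name__ == "__main__":
    print(automated_adjustment(20.0, 2.0, operator_override=False))
    print(automated_adjustment(12.0, 12.0, operator_override=True))
    print(automated_adjustment(12.0, 12.0, operator_override=False))
```

The ordering of the checks is the design choice worth noticing: the override is evaluated before any sensor logic, so no combination of inputs can put the automation back in charge while the operator is holding it off.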

Testing Doesn’t Mean You Are Safe

Phil Koopman recently wrote about a concept he calls The Insufficient Testing Pitfall:

Testing less than the target failure rate doesn’t prove you are safe. In fact you probably need to test for about 10x the target failure rate to be reasonably sure you’ve met it. For life critical systems this means too much testing to be feasible.
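To put rough numbers on that quote, here is a back-of-the-envelope sketch. It assumes failures arrive at a constant rate and that testing observes zero failures, in which case the probability of seeing no failure in T hours is exp(-rate × T). The function name, the 95% confidence level, and the target of one failure per billion hours are illustrative assumptions, not figures taken from Koopman’s post or from Boeing’s certification work.

```python
import math


def failure_free_hours_needed(target_rate_per_hour: float, confidence: float) -> float:
    """Hours of failure-free testing needed to claim, with the given confidence,
    that the true failure rate is at or below target_rate_per_hour.

    Assumes a constant failure rate, so P(zero failures in T hours) = exp(-rate * T).
    Solving exp(-rate * T) <= 1 - confidence for T gives the bound below.
    """
    if not (0.0 < confidence < 1.0):
        raise ValueError("confidence must be strictly between 0 and 1")
    if target_rate_per_hour <= 0.0:
        raise ValueError("target rate must be positive")
    return -math.log(1.0 - confidence) / target_rate_per_hour


if __name__ == "__main__":
    target = 1e-9  # assumed target: one failure per billion operating hours
    hours = failure_free_hours_needed(target, confidence=0.95)
    mtbf = 1.0 / target  # mean time between failures implied by the target
    print(f"{hours:.3g} failure-free hours ({hours / mtbf:.1f}x the implied MTBF)")
    print(f"about {hours / 8760:,.0f} years of continuous operation for a single unit")
```

Under these assumptions, even the best case of zero observed failures requires roughly three times the implied mean time between failures. Any failure seen during testing pushes the requirement higher, which is one way to see why a rule of thumb like 10x is plausible and why testing alone is infeasible for life-critical targets.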

No doubt about it: the airplane and software were tested. Probably significantly. Certainly in simulators and in test flights. But it seems that Boeing did not test the system enough to encounter these problems. And even if they did – what other problems would still be missed?

We need a plan for proving that our software works safely. Testing is not enough.

Could This Happen in Your Organization?

It’s easy for us to read about the Boeing 737 MAX saga, or other similar human-caused disasters, and think that we would never have walked down the path that led there. I implore you to have sympathy and understanding. Humans committed those actions. You are also human. You (and the organizations you are a part of) are capable of the same actions, for the same reasons. Keep the possibility of catastrophe in mind when you are tempted to let standards slide.

All of this is familiar to me as an engineer. I’ve worked on many fast-paced engineering projects. I’ve observed and personally made compromises to meet deadlines: some I proposed myself, and others that I disagreed with. I’ve seen these compromises work out, and I’ve seen them fail spectacularly. I got lucky. I don’t work on safety critical software, and I have never watched people die at the hands of my systems. I have deep sympathy for the engineers who will be forever plagued by their creation.

After the Lion Air crash, Boeing offered trauma counseling to engineers who had worked on the plane. “People in my group are devastated by this,” said Mr. Renzelmann, the former Boeing technical engineer. “It’s a heavy burden.”

We must also remember that nobody at Boeing wanted to trade human lives for increased profits. All human organizations – families, companies, industries, governments – are complex systems and have a life of their own. The organization can make and execute a decision which none of the participants truly want, such as shipping a compromised product or prioritizing profits over safety.

What I see with Boeing is an organization that made the same kind of decisions that I regularly see made at every organization I’ve been a part of. Like at all of these other organizations, they did not escape the consequences of their decisions. The difference for Boeing is that they were playing for bigger stakes, and the result of their misplaced bet is more painful.

There was not a villainous CEO who forced his minions to compromise the product. There was not an entire organization whose individuals decided to collectively disregard safety. The organization rallied around the goals of time-to-market and minimizing required pilot training. Momentum and inertia kept the company marching toward their aim, even if individuals disagreed. And perhaps nobody explicitly noticed that safety was de-prioritized as a result.

I want to repeat this: Boeing made the same decisions that are being made everywhere else.

We all have a duty to aim higher.

Further Reading

For more on the Boeing 737 MAX Saga:

- JT610 Crash Preliminary Report

- What is the Boeing 737 Max Maneuvering Characteristics Augmentation System?

- US Joins Other Nations in Grounding Boeing Plane

- The World Pulls the Andon Cord on the 737 MAX

- Flawed analysis, failed oversight: How Boeing, FAA certified the suspect 737 MAX flight control system

- Boeing was ‘Go, Go, Go’ to Beat Airbus with the 737 MAX

- Here’s What Was on the Record About Problems With the 737 Max

- Boeing to Make Key Change in 737 MAX Cockpit Software

- Boeing details changes to MCAS and training for 737 Max

- Boeing Promised Pilots a 737 Software Fix Last Year, but They’re Still Waiting

- FAA had initial version of Boeing’s proposed 737 MAX software fix seven weeks before the Ethiopian crash

- Boeing details its fix for the 737 MAX, but defends the original design

- Boeing’s automatic trim for the 737 MAX was not disclosed to the pilots

- Doomed Boeing Jets Lacked 2 Safety Features That Company Sold Only as Extras

- Boeing issues 737 Max fleet bulletin on AoA Warning after Lion Air Crash

- Confusion, then Prayer, in Cockpit of Doomed Lion Air Jet

- After a Lion Air 737 Max Crashed in October, Questions About the Plane Arose

- FAA Evaluates a Potential Design Flaw on Boeing’s 737 MAX after Lion Air crash

- US Rebukes Boeing Over Tanker

- Boeing 737 MAX Crashes: Everything You Need to Know

- Lion Air Flight 610

- Lion Air 737 MAX Crew Had Seconds to React, Boeing Simulation Finds

- Lion Air pilots scoured handbook in minutes before crash

Commentary on the situation:

- Three Implications of the 737 MAX Crashes

- Can Boeing Trust Pilots?

- Don’t Ground the Airplanes, Ground the Pilots

- What the MAX Story Says About Safety Oversight Today

- The 737Max and Why Software Engineers Might Want to Pay Attention

- Boeing’s B737 Max and Automotive ‘Autopilot’

- 737 MAX Analysis

- Trevor Sumner’s Twitter Thread on Systemic Causes of the 737 MAX Crashes

Thoughts on Autonomy and Safety:

- The Fly-Fix-Fly Antipattern for Autonomous Vehicle Validation

- The Insufficient Testing Pitfall for Autonomous System Safety

- Dealing with Edge Cases in Autonomous Vehicle Validation

- Credible Autonomy Safety Argumentation

Acknowledgments

Our creations are never the result of a single mind.

I want to thank Rozi Harris and Stephen Smith for reviewing early drafts of this essay. Their feedback, conversation, and exploration of the topics at hand has been extremely helpful. Many of their discussion points were incorporated into the essay.

Thanks to Nicole Radziwil for reviewing the article and making edits and corrections.

Thank you to the hard-working journalists and aviation fanatics who have published brilliant coverage and analysis for the 737 MAX saga. I know only a fraction of what others know about the problems discussed herein.

I also want to thank all of my colleagues who stood beside me over the years. It takes a monumental effort to build something new, and it rarely works out. We should all be amazed at our combined human triumph.

The lessons I present are hard-won, collectively generated, and the result of long debates. I hope the next generation of creators can use them to move beyond our current capabilities.

Excellent Article ! I am sharing it on my blog.

The statement that only the Boeing MAX has MCAS is inaccurate. A similar system is installed on the Boeing KC-46 Pegasus aerial refueling aircraft. And on the Pegasus they use two angle of attack sensors instead of one for redundancy.

You are correct and I was aware of that. I meant to state "commercial airplane". I will update the article.

Also, I recall reading that the USAF was unhappy with Boeing after the 737 MAX groundings. Do you know if there were any incidents with the KC-46, or are they just reviewing the training materials?

It also sounds like the KC-46 disabled the MCAS system in response to a pilot pulling on the yoke, and was also configured so the pilots could override the system.

Interesting that one implementation avoided many of the problems described herein.

I am not an aviation expert. But brief internet research shows that the KC-46 is a new aircraft and the first delivery was just January 2019. And the U.S. Air Force stopped accepting planes on March 23rd 2019 because they found foreign object debris or FOD inside the planes. This follows a similar stoppage on March 1st that was triggered by debris discovered in several airplanes, including loose tools and unwanted materials. https://www.popularmechanics.com/military/aviation/a27032708/air-force-stops-deliveries-of-trash-filled-kc-46-tankers-yet-again/

My guess is that the KC-46 has a more serious issue than debris (maybe similar to the 737 MAX). But for political reasons they use FOD as the complaint.

As an older, senior software developer, I’ve seen bad software as a growing problem over the past two decades, especially with the puzzle-like erector-set toy IDE called object oriented programming (OOP). With OOP, the model of the airplane is only secondary to the abstract model that is OOP. Objects are most often related; this fact then perhaps makes sense when an object was triggered and then even as the pilots turned off the MCAS, it made almost no difference, the objects were already triggered.

Amazing article-So Comprehensive-filling in MANY GAPS….that never made ANY sense…in all media coverage-I continually believed SOME info was Missing-as The story reported (I read anything and everything) NEVER made sense…after your article? I felt SANE—as The analysis, you have made complete sense…and it was also?…. Compassionate.

Pilots disengaged MCAS but it re-engaged against the will of the Pilots, took control of the Plane and Crashed the Plane. If MCAS senses another potential stall (even if it’s the result of another malfunction) AFTER the pilots believe they have shut off MCAS, the feature was designed to take control of the aircraft again and will THEN OVERRIDE THE CUTOUT SWITCH. At that point, the only way to shut off MCAS is to completely uninstall the software while in flight.

Fantastic article throughout! I would add – it is also a software problem. When taking in input, if the values diverge wildly, the software should recognize that the input is faulty and not act upon it.

The only issue I have is: "I would bet that all engineers are familiar with rushed releases. We cut corners, make concessions, and ignore or mask problems – all so we can release a product by a specific date. "

As an engineer, I have indeed seen this problem, but we don’t cut corners or mask problems on our own initiative. It’s caused by managers getting frustrated with the delays from the engineering department. I’ve been told to stop being a perfectionist, just wrap it up and get it out. Just like the engineers whose complaints were ignored before the Space Shuttle Challenger blew up, I would bet these engineers knew full well that the MCAS should be tested more, that there should be safety checks built into the software, etc.

Thanks Phillip, I enjoyed reading your post and hope more details emerge on the decision making from within Boeing over time. Managers and regulatory bodies should take note of the impact to public trust and brand damage if holes are left open in the processes designed to keep the public safe.

Please correct reference to A330 to A320neo in the background section. A330 is a different class of airplane to the 737 and 320.

For other aircraft that have AoA sensors like the Airbus they have 3 of them, so that if one is defective, two will be accurate. Also, in Airbus this only provides feedback to the pilots but no direct automatic action by the plane since the planes are not designed to stall due to the AoA effects.

https://www.bloomberg.com/amp/news/articles/2019-04-11/sensors-linked-to-737-crashes-vulnerable-to-failure-data-show

Updated, thanks for letting me know.

First, this is very sad beyond words. My condolences to the families and lost ones.

Profit incentives to meet schedules must be re-evaluated when it comes to safety-critical systems. Humans were very much in the loop when it came to this stuff… the Kool-Aid mentality can become dangerous.

Also, how is it possible that, with hundreds of PhDs and very smart engineers, there is a single point of failure for the entire MCAS system?

Complexity for the sake of complexity seems to be driven by ego and perhaps job protection. Designs must be kept as simple as possible for the given problem at hand. It can become complex when needed and when it directly adds value. (and not for a budget spreadsheet).

https://martinfowler.com/articles/is-quality-worth-cost.html

Reading about the 737 MAX reminded me of Nancy Leveson’s book "Engineering A Safer World", where she points out that safety is really a total system property (the system being not just the product, but the entire development, manufacturing, operating, and regulatory context in which it exists). Preventing further accidents is therefore not just fixing one thing.

Her book is free to download, and I highly recommend it. You can read my review of it (with download link) at https://flinkandblink.blogspot.com/2019/07/review-engineering-safer-world-by-nancy.html.

Isn’t that a specification problem?